Infibee Technologies provides India’s No.1 Hadoop Training with global certification and 100% placement support.

Kickstart your career with our Hadoop Course, guided by trainers with 12+ years of industry experience. The course offers affordable fees and includes hands-on mock projects, resume preparation, interview preparation, and dedicated placement training. Learners also get lifetime access to recordings of the live classes, so they can keep learning and revise at their convenience.

Join our Hadoop Training Institute and ignite your career with opportunities for high-paying jobs in top companies.

Live Online :

Infibee’s Hadoop Course provides comprehensive training in big data processing, distributed computing, and scalable data solutions. It combines theory with practical work, making it a good fit for students looking for Hadoop training programs and reliable Hadoop training institutes near them. Organizations rely on Hadoop to store and analyze very large data sets, which creates high demand for this skill in today's data-centric environment.

The Hadoop Training Institute helps students, working professionals, and career changers develop advanced skills in Hadoop ecosystem tools, including HDFS, MapReduce, Hive, Spark, and other technologies. Expert trainers teach through real-time projects, case studies, and mock assignments, so students gain practical knowledge of the subject.

Infibee provides both physical classroom instruction and online educational programs to students who want to learn about Hadoop through their preferred learning method.

The Hadoop Training covers the essential concepts: distributed storage with HDFS, data processing with MapReduce, querying with Hive, data ingestion with Sqoop and Flume, and real-time analytics with Spark. The curriculum is designed to match current industry standards and job requirements.

| Hadoop Course Topics Covered | Applications of Hadoop Training | Tools Used |
|---|---|---|
| Hadoop Architecture & HDFS | Big Data Analytics | Hadoop |
| MapReduce Programming | Data Warehousing | Hive |
| Hive & Pig | Log Processing | Pig |
| Apache Spark | Real-Time Data Processing | Spark |
| Sqoop & Flume | Data Migration | Sqoop |
| YARN Resource Management | Machine Learning | Flume |

Choosing the right Hadoop Training Institute is essential for career growth. Infibee Technologies stands out as a top provider of Hadoop Classes due to its industry-oriented curriculum and expert trainers.

Infibee Technologies has earned recognition as the leading Hadoop Training Institute in India through its complete learning programs which meet industry requirements. Students who want to learn Hadoop can find training programs at Infibee which offer both classroom and online learning throughout major Indian cities. The institute delivers practical knowledge through its live projects which allow students to develop their ability to manage real-world big data challenges.

Infibee offers strong placement assistance that connects students with top MNCs. Dedicated placement training, including mock interviews and resume-building sessions, helps students become job-ready. The trainers draw on their industry expertise to make the learning effective.

On completing the program, students receive the Hadoop Course certification, which provides global recognition of their big data expertise. The certification demonstrates practical skills with Hadoop tools and ecosystem components, which improves job prospects.

TCS, Infosys, Wipro, Accenture, Capgemini, Cognizant

| S.No | Certification | Cost (INR) | Validity |
|---|---|---|---|
| 1 | Cloudera CCA175 | ₹20,000 | 2 Years |
| 2 | Cloudera CCP Data Engineer | ₹30,000 | 2 Years |
| 3 | Hortonworks HDPCA | ₹18,000 | 2 Years |
| 4 | AWS Big Data Specialty | ₹25,000 | 3 Years |
| 5 | Google Professional Data Engineer | ₹20,000 | 2 Years |

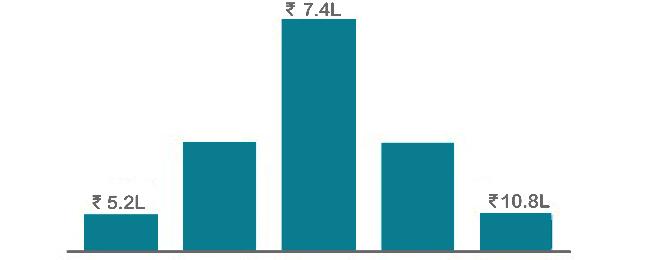

| Level | Job Role | Salary (LPA) |
|---|---|---|
| Freshers (0–3 yrs) | Hadoop Developer Trainee | 3–5 |
| Freshers (0–3 yrs) | Junior Big Data Engineer | 4–6 |
| Freshers (0–3 yrs) | Data Analyst (Hadoop) | 4–6 |
| Mid-Level (4–8 yrs) | Hadoop Developer | 6–10 |
| Mid-Level (4–8 yrs) | Big Data Engineer | 8–12 |
| Mid-Level (4–8 yrs) | Data Engineer | 8–12 |
| Senior (9+ yrs) | Senior Big Data Architect | 15–25 |
| Senior (9+ yrs) | Hadoop Consultant | 18–30 |
| Specialized Roles | Spark Developer | 10–18 |
| Specialized Roles | Big Data Architect | 15–30 |

Yes! Infibee Technologies offers Hadoop Training across major cities through online mode including:

With expert mentors, practical training, and placement support, Infibee Technologies remains the No.1 choice for Hadoop Online Course across India.

Step 1: Register for a Free Demo

Visit our website and fill out the inquiry form. Attend a free demo session to understand our Hadoop Classes and training approach.

Step 2: Select Your Training Mode

Choose between classroom, online, or corporate Hadoop Training. Select a batch timing that suits your schedule.

Step 3: Start Your Hadoop Journey

Begin your Hadoop Course with expert trainers. Work on real-time projects and prepare for certification and job placement.

Take the first step towards a successful Big Data career with Infibee Technologies. Whether you are searching for Hadoop Training Near Me, Hadoop Course Near Me, or the best Hadoop Training Institute, we provide everything you need to succeed. Join today and unlock high-paying career opportunities in top companies!

Upgrade Your Skills & Empower Yourself

Begin your journey into the world of big data with our Hadoop Course! Covering essential topics such as Hadoop Distributed File System (HDFS), MapReduce programming paradigm, and Hadoop ecosystem components like HBase, Hive, Pig, and Spark, this course is designed to equip you with the foundational knowledge and practical skills needed to excel in the field of big data analytics.

Enroll in our Hadoop Training, designed to offer top-tier instruction with a robust grounding in fundamental principles coupled with a hands-on approach. By immersing yourself in contemporary industry applications and scenarios, you will refine your abilities and acquire the proficiency to undertake real-world projects employing industry best practices.

By developing this Hadoop project, you will gain an understanding of the fundamentals of Hadoop architecture. Given a web server log file in which each entry contains a timestamp and a query, you will learn how to retrieve the top 15 queries issued over the previous 12 hours.
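The log-analysis task described above can be tried locally in a map/reduce style before it is moved to a cluster. The following is a minimal Python sketch, not the actual project code: the tab-separated `timestamp<TAB>query` line format, the timestamp layout, and the function names `map_line`, `reduce_counts`, and `top_queries` are all assumptions made for illustration.

```python
from collections import Counter
from datetime import datetime, timedelta

# Assumed timestamp layout for each log line (hypothetical format).
STAMP_FORMAT = "%Y-%m-%dT%H:%M:%S"


def map_line(line, now, window):
    """Map step: return (query, 1) if the line is inside the time window, else None."""
    parts = line.rstrip("\n").split("\t", 1)
    if len(parts) != 2:
        return None  # skip malformed lines
    stamp, query = parts
    try:
        when = datetime.strptime(stamp, STAMP_FORMAT)
    except ValueError:
        return None  # skip lines with an unparseable timestamp
    if now - window <= when <= now:
        # Normalise case so "Hive Join" and "hive join" count as one query.
        return (query.strip().lower(), 1)
    return None


def reduce_counts(pairs):
    """Reduce step: sum the counts emitted for each query."""
    counts = Counter()
    for query, n in pairs:
        counts[query] += n
    return counts


def top_queries(lines, now, hours=12, n=15):
    """Return the n most frequent queries seen in the last `hours` hours."""
    window = timedelta(hours=hours)
    mapped = (map_line(line, now, window) for line in lines)
    pairs = (pair for pair in mapped if pair is not None)
    return reduce_counts(pairs).most_common(n)


if __name__ == "__main__":
    now = datetime(2024, 1, 1, 12, 0, 0)
    sample_log = [
        "2024-01-01T11:00:00\thadoop tutorial",
        "2024-01-01T10:30:00\tHadoop Tutorial",
        "2024-01-01T09:00:00\thive join",
        "2023-12-31T20:00:00\told query",  # 16 hours old, outside the window
    ]
    for query, count in top_queries(sample_log, now):
        print(count, query)
```

On a real cluster the map and reduce steps would run as separate tasks over file splits; here they are plain functions so the logic can be checked on a small sample first.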

HDFS (the Hadoop Distributed File System) was designed primarily for managing large files, as a close study of its architecture shows. Reading many small files is inefficient for HDFS because it requires many seeks and a lot of hopping from one DataNode to another to retrieve each file.
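A rough way to see the cost of small files is to count how many blocks HDFS has to track and open. The sketch below is back-of-the-envelope arithmetic, not a measurement of a real cluster: it assumes a 128 MB block size (the default `dfs.blocksize` in recent Hadoop releases), and the helper names `blocks_for_files` and `blocks_if_merged` are hypothetical.

```python
# Assumed HDFS block size in bytes (128 MB); real clusters may configure another value.
BLOCK_SIZE = 128 * 1024 * 1024


def _ceil_div(a, b):
    """Integer ceiling division without floating-point rounding."""
    return (a + b - 1) // b


def blocks_for_files(file_sizes, block_size=BLOCK_SIZE):
    """Blocks needed when every file is stored separately.

    Blocks are not shared between files, so each non-empty file
    occupies at least one block of its own.
    """
    return sum(_ceil_div(size, block_size) for size in file_sizes)


def blocks_if_merged(file_sizes, block_size=BLOCK_SIZE):
    """Blocks needed if the same bytes were stored as one combined file."""
    return _ceil_div(sum(file_sizes), block_size)


if __name__ == "__main__":
    small_files = [100 * 1024] * 10_000  # ten thousand files of 100 KB each
    print("separate files:", blocks_for_files(small_files), "blocks")
    print("merged file:   ", blocks_if_merged(small_files), "blocks")
```

With these assumed sizes, the same data needs 10,000 blocks as separate files but only 8 blocks when merged, which is why combining small files into larger ones is a common first step in this kind of project.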

This is a well-known Kaggle competition for evaluating a music recommendation system. Participants use the Million Song Dataset, made available by Columbia University's Lab for Recognition and Organisation of Speech and Audio. The dataset includes audio features and metadata for one million popular, contemporary songs.

Educate your workforce with new skills to improve their performance and productivity.

The Hadoop Training is designed to equip participants with comprehensive skills and practical expertise in the field of big data analytics. The objectives of this training include mastering core Hadoop concepts, applying acquired skills through hands-on projects, fostering critical thinking abilities, and preparing participants to tackle professional challenges effectively.

The objectives of the Hadoop training programme are:

The course gives learners practical knowledge and the ability to handle real-world Hadoop problems. These skills improve their career prospects by giving them a competitive advantage in the job market and supporting career advancement in the big data field.

The emphasis on real-world projects in the Hadoop training programme is crucial for providing participants with practical experience in applying Hadoop concepts to authentic scenarios. Through hands-on projects, participants gain invaluable exposure to industry-relevant challenges and develop the skills needed to address them effectively. This approach ensures that participants are well-prepared to tackle real-world Hadoop implementations and excel in their professional careers.

The Hadoop training programme is designed to accommodate participants with varying levels of experience. While prior knowledge of programming languages such as Java or Python and familiarity with basic concepts of data management may be beneficial, it is not mandatory. The training programme is structured to cater to beginners as well as experienced professionals looking to enhance their skills in big data analytics using Hadoop.

Throughout the Hadoop training programme, participants will have access to a variety of learning resources and support mechanisms to facilitate their learning journey. These resources may include comprehensive course materials, interactive lectures, hands-on labs, and real-world projects. Additionally, participants will receive guidance and mentorship from experienced trainers who are experts in the fields of big data analytics and Hadoop. Furthermore, regular assessments, feedback sessions, and dedicated support channels will be available to ensure participants receive the assistance they need to succeed in the programme.

1. Enhanced Employability: Acquiring Hadoop skills boosts your appeal to employers seeking Big Data expertise.

2. Lucrative Opportunities: Opens doors to high-paying roles like big data engineer and Hadoop developer.

3. Practical Experience: Gain hands-on experience tackling real-world data challenges.

4. Professional Growth: Mastering Hadoop concepts fast-tracks career advancement.

5. Industry Relevance: Stay abreast of the latest trends, ensuring long-term career viability in tech.

Our Job Assistance Programme offers you special guidance through the course curriculum and helps in your interview preparation.

Hadoop is a widely used open-source big data framework that runs on clusters of commodity hardware and scales from a single server to thousands of machines. Hadoop skills open one of the most rewarding career paths in the software development sector, with an average annual salary of 10 LPA.

Infibee’s placement guidance navigates you to your desired role in top organisations, ensuring you stand out and excel in every opportunity.

You need not worry about missing a class. Our dedicated course coordinator will help you with anything related to administration and will arrange a session with the trainers in place of the missed one.

Yes, of course. You can contact our team at Infibee Technologies, and we will schedule a free demo or a conference call with our mentor for you.

We provide classroom and online training, self-paced study material, and recorded sessions, based on each student's preferences.

Yes, all our trainers are industry professionals with extensive experience in their respective domains. They bring hands-on practical and real-world knowledge to the training sessions.

Yes, participants typically receive access to course materials, including recorded sessions, assignments, and additional resources, even after the training concludes.

We provide placement assistance to students, including resume building, interview preparation, and job placement support for a wide range of software courses.

Yes, we offer syllabus customisation for both individual candidates and corporate clients.

Yes, we offer corporate training solutions. Companies can contact us for customised programmes tailored to their team’s needs.

Participants need a stable internet connection and a device (computer, laptop, or tablet) with the necessary software installed. Detailed technical requirements are provided upon enrollment.

In most cases, such requests can be accommodated. Participants can reach out to our support team to discuss their preferences and explore available options.

We offer courses that help you improve your skills and find a job at your dream organisations.

Courses that are designed to give you top-quality skills and knowledge.
